Introduction

Salsa is committed to highlighting great digital case studies from around the world, including highly technical pieces. This post investigates the Ask GeorgiaGov project, a great example of digital innovation in the public sector.

The presentation started with some introductory words from the host Sarah Anthon, who’s a part of Acquia’s Business Development team. Sarah gave a quick overview of Acquia and how the company helps organisations to overcome the challenges of the modern and fast-paced world of digital experiences. Shortly after, she handed over to the main presenter Preston So.

Preston is the director of Research and Innovation at Acquia and leads Acquia Labs, the team that delivered the “Alexa, ask Georgia” project in close collaboration with Digital Services Georgia in the US. He briefly described the mission of Acquia Labs, which is to partner with clients to push the envelope on emerging technologies such as augmented and virtual reality, conversational interfaces, beacon technology, geolocation and many others.

The presentation plan was logically separated into a few different sections:

Motivation behind the Ask GeorgiaGov project

Historical context on what led to the creation of the product

Product strategy

How it works, both from technical and non-technical points of view, and the role of Drupal

The impact and outcomes

Motivation

Preston said: “One of the things that is most interesting about state and local government, especially in the United States, is that there is a very strong focus on a well-educated and well-informed citizenry.” He explained that the key to achieving this is to be more agnostic to the devices and technologies that those citizens are using and to provide them with inclusive and accessible content.

Georgia has been a very progressive state in the field of omni-channel content strategy, allowing its citizens to connect with the information in unique ways. But what led to that was the previous discovery of the channels that people use to interact with the government.

One example of such progressive thinking is that Georgia was the first state to offer a text-to-speech service, a technology that allowed any text-based website content to be read aloud to users on demand. This opened up a new line of communication between the government and the people, underlining the importance of innovation and inspiring more advanced services that cover a broader range of channels.

The team quickly learned that users like to be independent and to be able to switch between channels, whether that is a computer, a smartphone, Amazon Alexa or any other device.

“A lot of the paradigms and principles that we have been used to over the course of the last few years are being completely shattered by the rapid changes in technology,” Preston said.

These changes are driving the re-invention of content. Firstly, we now have to rethink the way content is written, the way it is strategised and even the way it is architected. Secondly, we need to make sure that the content is accessible, often in ways that we haven’t thought of before.

Digital Services Georgia is the state agency that governs technological implementations, development and various projects in the digital space. One of the things they have tried to practise is innovation as a byproduct: while bringing new projects to life, they look for new, innovative experiences that were not part of the initial goal. So far, this has allowed them not only to deliver planned services but also to stay up to date with technology and collect new ideas, such as the conversational experiences that they offer today.

Content experience was identified as one of the most influential areas to focus on, as it really should be what drives the users. Not design, not anything else: the content itself has to be crafted in such a way that it provides the most value.

As Preston explains, websites are no longer enough. There is an urge to move to other paradigms, where text-based information is just one of many ways to convey the information.

In the case of Georgia, and often government in general, the people usually weren’t (or still aren’t) in control when it comes to retrieving information. For instance, one of the biggest issues is that often people are stuck on the phone lines trying to get through to someone who can answer their questions. Another example would be poor content and confusing information architecture that sets up a massive barrier. These issues also often overlap, which further complicates the matter.

Stepping into the conversational space was viewed as a way to put citizens back in control of their experiences.

What is Ask GeorgiaGov?

Simply put, Ask GeorgiaGov is a new Alexa skill that offers citizens of Georgia the ability to ask questions about popular topics like “How to renew my driver’s license”, “How to register to vote”, “How to obtain a nursing license” and even “Who is the governor of Georgia?”

The state of Georgia had three value propositions for the project:

Getting information to citizens faster, anywhere and at any time without the frustration of waiting for the answers

Widening access to the information

Upholding the spirit of open-source innovation, giving back to the community

Product strategy

In discussions with the Digital Services Georgia team, three main strategic points were outlined:

Build towards a single source of truth: One of the biggest problems in government is insufficient budgets, which translates into an inability to hire the personnel and resources needed to handle many different content channels. The project aimed for a single, centralised source of content that would not differ by platform or technology.

Reach users where they are, independent of their abilities or socioeconomic status.

Leverage open source wherever possible.

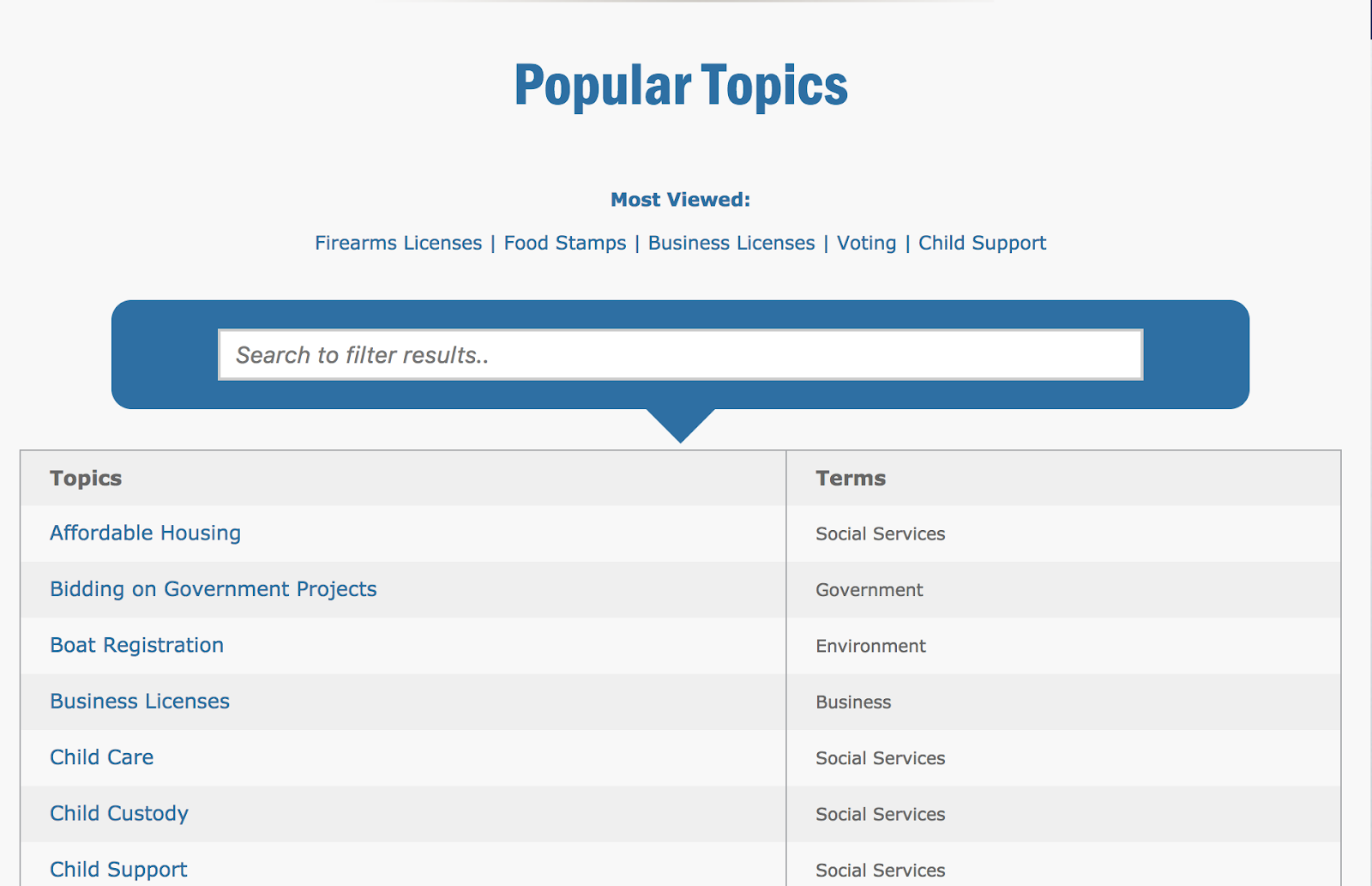

If you go to the Georgia.gov website right now, you’ll find a list of 52 different topics that all have their designated pages. All of this content is written in the form of frequently asked questions. This provided a great foundation to build on for the conversational experience, as the content was already easy to find and access.

And if you look at some of the individual pages, you’ll notice that each of them has a “What You Should Know” section that features related information on a chosen topic.

One of the interesting things about this kind of format is that frequently asked questions are actually an optimal source of information for conversational technology because they are written in a conversational way.

How it works

To answer the question of how Ask GeorgiaGov works, you first need to know how Alexa works.

Amazon Alexa is a hardware voice assistant interface, and its ability to be extended is controlled by Amazon. Unlike its competitors, Alexa is actually programmable and customisable to a great degree. If you want to program a new skill, you can use the Alexa Skills Kit, a purpose-built set of tools, and then integrate the skill with a system of your choice, like Drupal.

Alexa comes with a pretty rich set of skills out-of-the-box, which can be used as a baseline for creating new customised skills in the form of plugins.

Before diving into the technicalities, it helps to know that the main capability of Alexa lies in capturing speech, transforming it into a machine language, and passing it to a processor that understands that same language. The response then travels back through the same flow, with Alexa generating the voice response.

There are three components to each Alexa skill:

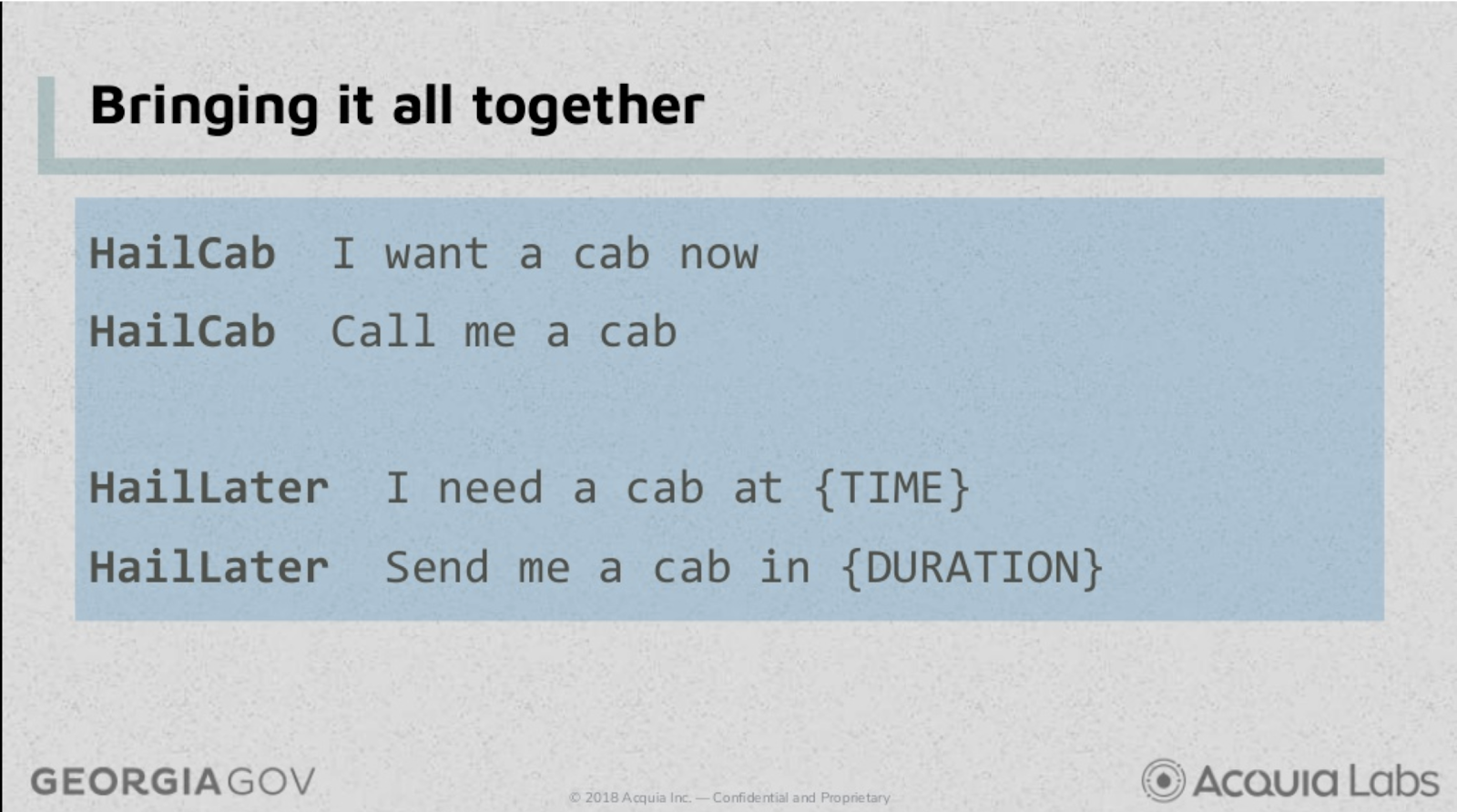

Intents: Operations or requests that the user initiates in a conversation. Quite literally, the intent that the user holds during the conversation. Examples of intents include “Hail a cab”, “Order a coffee” and “Order fish and chips”. These are the tasks you want Alexa to perform.

Utterances: Example phrases that help Alexa to “learn” and understand the different ways users can ask for the same thing. These are usually variations of a request that are tied back to the original intent. For instance, both the “Call a taxi” and “Call a cab” utterances would be recognised, and Alexa would learn to trigger the same original intent.

Slots: The words that fill in parameters for your intents, for example “5PM” in “Call a cab at 5PM” or “the office” in “Order a pizza to the office”. These are the variables within utterances that can capture data such as a date, colour, city name, car model or any other related piece of information that you want to pass with your request.

Below is an example of how Alexa interprets a request:

Alexa will understand that there is an intent “HailCab” from the utterances on the right-hand side. However, if you provide some extra information in your utterances then Alexa might tie them back to a variation of the intent “HailLater” that accepts parameters (slots) and has extra functionality to satisfy the request.
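To make the relationship between intents, utterances and slots more concrete, here is a minimal Python sketch. It is not how Alexa's speech recognition actually works, and the sample phrases and the `time` slot name are assumptions made up for illustration; it only shows how several utterances can map back to one intent, and how an utterance with a slot can resolve to a different intent that carries a parameter.

```python
import re

# Hypothetical interaction model: intent name -> sample utterances.
# "{time}" marks a slot. All names and phrases are illustrative only.
INTERACTION_MODEL = {
    "HailCab": ["call a cab", "call a taxi", "hail a cab"],
    "HailLater": ["call a cab at {time}", "call a taxi at {time}"],
}


def compile_sample(sample):
    """Turn a sample utterance into (regex, slot names)."""
    parts = re.split(r"\{(\w+)\}", sample)
    literals, slot_names = parts[0::2], parts[1::2]
    # Literal text is escaped; each slot becomes a capturing group.
    pattern = "(.+)".join(re.escape(text) for text in literals)
    return re.compile(pattern, re.IGNORECASE), slot_names


def resolve(spoken):
    """Return (intent, slots) for the first sample utterance that matches."""
    text = spoken.strip()
    for intent, samples in INTERACTION_MODEL.items():
        for sample in samples:
            regex, slot_names = compile_sample(sample)
            match = regex.fullmatch(text)
            if match:
                return intent, dict(zip(slot_names, match.groups()))
    return None, {}


print(resolve("Call a taxi"))
print(resolve("Call a cab at 5PM"))
```

In this toy version, “Call a taxi” and “Call a cab” both resolve to `HailCab`, while adding a time resolves to `HailLater` with the time captured as a slot value.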

The Alexa skill that was created for the Ask GeorgiaGov project defines custom intents and utterances for:

Getting a user’s question (interpret what the user needs)

Finding out if the user wants related information

Getting a phone number the user can call if more information or action is needed

Once captured, Alexa packages this information into a standardised format and sends it off to a processor. This is where Drupal comes into the picture, acting as an Alexa endpoint to process the request and respond with the relevant information.

Drupal sports a custom module that uses an underlying open-source PHP library, which enables the system to understand the machine language of Alexa. When a user question is received, Drupal transforms it into slot data and performs a search against the indexed site content.
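As a rough illustration of what such an endpoint does, the sketch below reads the question out of an Alexa-style JSON request and wraps an answer in an Alexa-style JSON response. The project itself did this in PHP inside Drupal; Python is used here only for brevity. The `Question` slot name and the `search` callback are assumptions invented for this example, not names from the Ask GeorgiaGov code.

```python
def extract_slot(envelope, slot_name):
    """Return the value of a slot from an IntentRequest, or None."""
    request = envelope.get("request", {})
    if request.get("type") != "IntentRequest":
        return None
    slots = request.get("intent", {}).get("slots") or {}
    return (slots.get(slot_name) or {}).get("value")


def build_speech_response(text, end_session=False):
    """Wrap plain text in the JSON structure a custom skill sends back."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def handle(envelope, search):
    """Answer a question; `search` is any callable from question to answer."""
    question = extract_slot(envelope, "Question")  # hypothetical slot name
    if not question:
        return build_speech_response("What would you like to know?")
    answer = search(question)
    if answer is None:
        return build_speech_response("Sorry, I could not find anything on that.")
    return build_speech_response(answer)


# Example request, trimmed to the fields this sketch reads.
example = {
    "version": "1.0",
    "request": {
        "type": "IntentRequest",
        "intent": {
            "name": "AskQuestion",  # hypothetical intent name
            "slots": {"Question": {"name": "Question", "value": "vehicle registration"}},
        },
    },
}
reply = handle(example, lambda q: f"Here is what I found about {q}.")
print(reply["response"]["outputSpeech"]["text"])
```

Keeping the search behind a callback mirrors the separation described above: the endpoint only translates between Alexa's format and the site's own content search.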

So, in its essence, when you have a conversation with Alexa using the Ask GeorgiaGov skill, you are triggering several things.

First, you are conducting a search across those 52 content pages to get the most relevant match to a particular topic. Next, you also get related information that allows you to ask additional questions or drill into specifics of the topic if needed. And finally, at any point of the conversation you can ask Alexa for a phone number to call.
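The three steps can be sketched in a few lines of Python. This is a toy under stated assumptions: the topics, answers and phone numbers are placeholders invented for the example, and simple keyword overlap stands in for the site's real search index, which the presentation did not describe in detail.

```python
import re
from dataclasses import dataclass, field
from typing import List


@dataclass
class Topic:
    title: str
    answer: str
    related: List[str] = field(default_factory=list)
    phone: str = ""


# Placeholder content invented for this sketch; not real Georgia.gov data.
TOPICS = [
    Topic("Driver's license renewal",
          "Placeholder answer about renewing a driver's license.",
          ["Placeholder related fact about renewal fees."], "555-0100"),
    Topic("Vehicle registration",
          "Placeholder answer about registering a vehicle.",
          ["Placeholder related fact about registration deadlines."], "555-0101"),
]

STOPWORDS = {"how", "do", "i", "my", "a", "an", "the", "to", "about", "is"}


def keywords(text):
    """Lower-case words in the text, minus a few common stopwords."""
    return {w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS}


def best_match(question, topics):
    """Pick the topic sharing the most keywords with the question."""
    asked = keywords(question)
    best, best_score = None, 0
    for topic in topics:
        score = len(asked & keywords(topic.title + " " + topic.answer))
        if score > best_score:
            best, best_score = topic, score
    return best


def converse(question, topics, wants_related=False, wants_phone=False):
    """Return the lines the skill would speak for one exchange."""
    topic = best_match(question, topics)
    if topic is None:
        return ["Sorry, I could not find anything on that topic."]
    spoken = [topic.answer]
    if wants_related:
        spoken.extend(topic.related)
    if wants_phone and topic.phone:
        spoken.append(f"You can call {topic.phone} for more help.")
    return spoken


for line in converse("How do I renew my driver's license?", TOPICS,
                     wants_related=True, wants_phone=True):
    print(line)
```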

At this point of the presentation, Preston showed a video of a Georgian citizen asking Alexa how to renew her driver’s license and receiving relevant responses via a dialogue.

The impact and outcomes

The team stumbled on several issues and challenges that they didn’t expect. On the upside, they also discovered some great impacts that reveal the breadth of the conversational space.

Content that we consider to be web-based (or webpage-driven if you like) is fundamentally very different from conversational content. Web content is predominantly prose, relies heavily on visual context and linking, and has a high verbosity tolerance. Preston elaborated on the latter, explaining that a user might read an article with thousands of words and not feel much disengagement or fatigue, often scrolling through irrelevant sections and performing a speed reading.

In this sense, conversational content is very different: it has to respect the human attention span, delivering single, brief thoughts. It also has no user interface and is link- and reference-poor, taking away the visual component that we’re so used to in web-based content. In addition, the traditional information architecture, with all its menu links, breadcrumbs and hierarchy, simply cannot fit into the conversational landscape. Instead, a more guided experience is expected, with a pre-defined flow that leads to a relevant result. There’s really no free-form interaction with the content anymore.

The content strategy also needs to be different when it comes to conversation. In the web world we use things like headers, paragraphs and styles to structure and accentuate information. Needless to say, these techniques also don’t apply and have no place within the conversational environment.

There were two main rules put together by the project team when it came to content:

The editorial workflow shouldn’t be burdened by the addition of conversational content.

Editing a piece of web content and conversational content should be indistinguishable and channel agnostic.

To make sure that the content was ready for the conversational experience and omni-channel delivery, the team needed to perform a comprehensive content audit. They went through each line, each word, each title and each link of the existing content to determine how it was going to perform in a dialogue scenario, thus defining its conversational legibility. The standard question that the team used to test content was “Is this content understandable to a listener rather than a reader?” (in the same way as if it were broadcast on TV or radio).

The second aspect was content discoverability, which endeavoured to find out if the content in question was accessible to a listener via a unidirectional flow rather than link-rich pages.

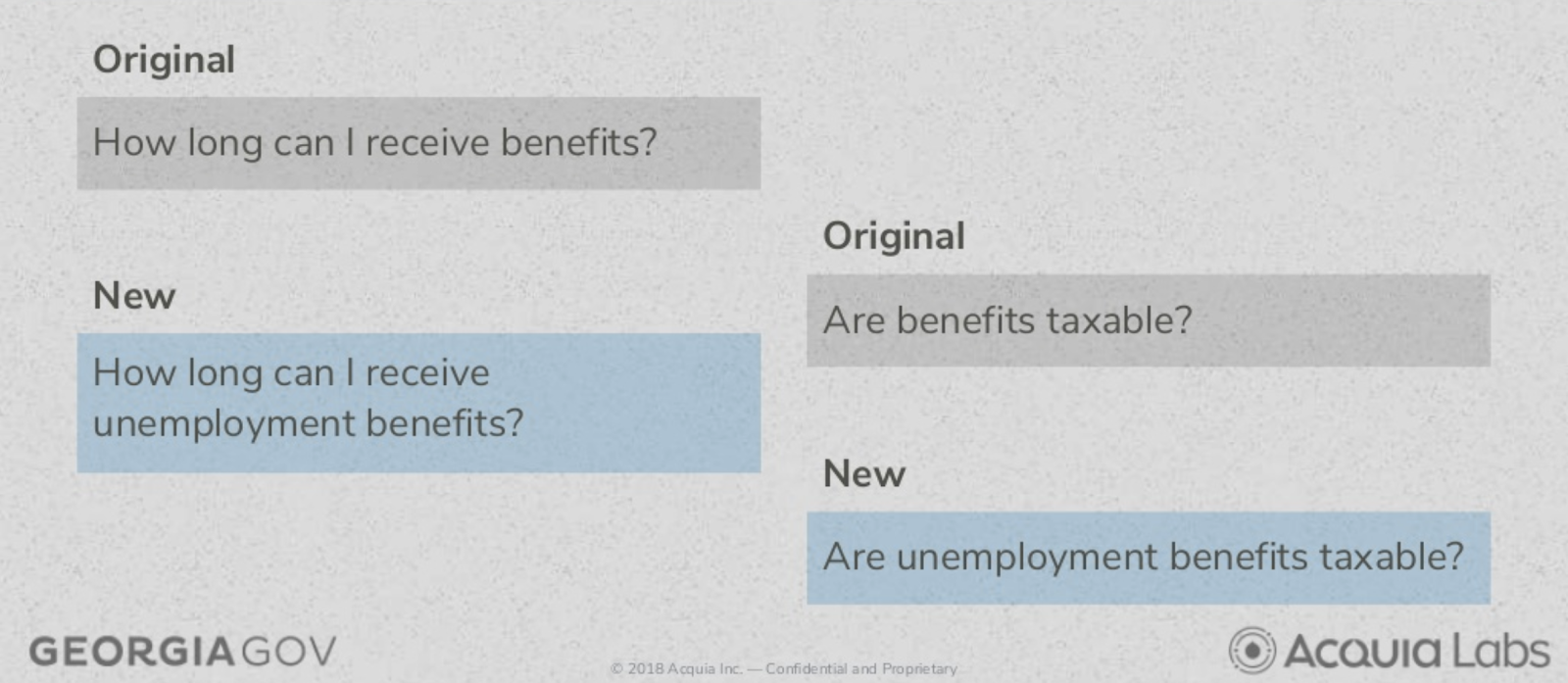

Below is an example of some of the content changes implemented to ensure content is less context reliant.

When interacting via voice you might be asking about something very specific, for example, a driver’s license: “How do I get my driver’s license?” With every subsequent question this context shouldn’t be lost. So “Where do I renew my license since it has been revoked?” would need to be understood as if you’re still talking about the driver’s license and not any other licenses that would otherwise conflict with the conversation flow. So rather than focusing on the ambiguous words, the team decided to inject more specificity into every single question. Behind the scenes, the functional logic was re-inserting those contextual words into each single question to make sure that the context persisted.
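A minimal sketch of that re-insertion step might look like the following. The function name and the idea of passing the topic noun and its qualified form as arguments are assumptions for illustration; the presentation did not show the actual logic.

```python
import re


def add_topic_context(question, noun, qualified):
    """Replace a bare noun with its qualified form unless already qualified."""
    if qualified.lower() in question.lower():
        return question  # context is already explicit
    pattern = rf"\b{re.escape(noun)}\b"
    # A function replacement avoids any escape handling in the new text.
    return re.sub(pattern, lambda _m: qualified, question,
                  count=1, flags=re.IGNORECASE)


followup = "Where do I renew my license since it has been revoked?"
print(add_topic_context(followup, "license", "driver's license"))
```

With the topic carried over from the first question, the follow-up is searched as “Where do I renew my driver's license since it has been revoked?”, while a question that already names the driver's license is left untouched.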

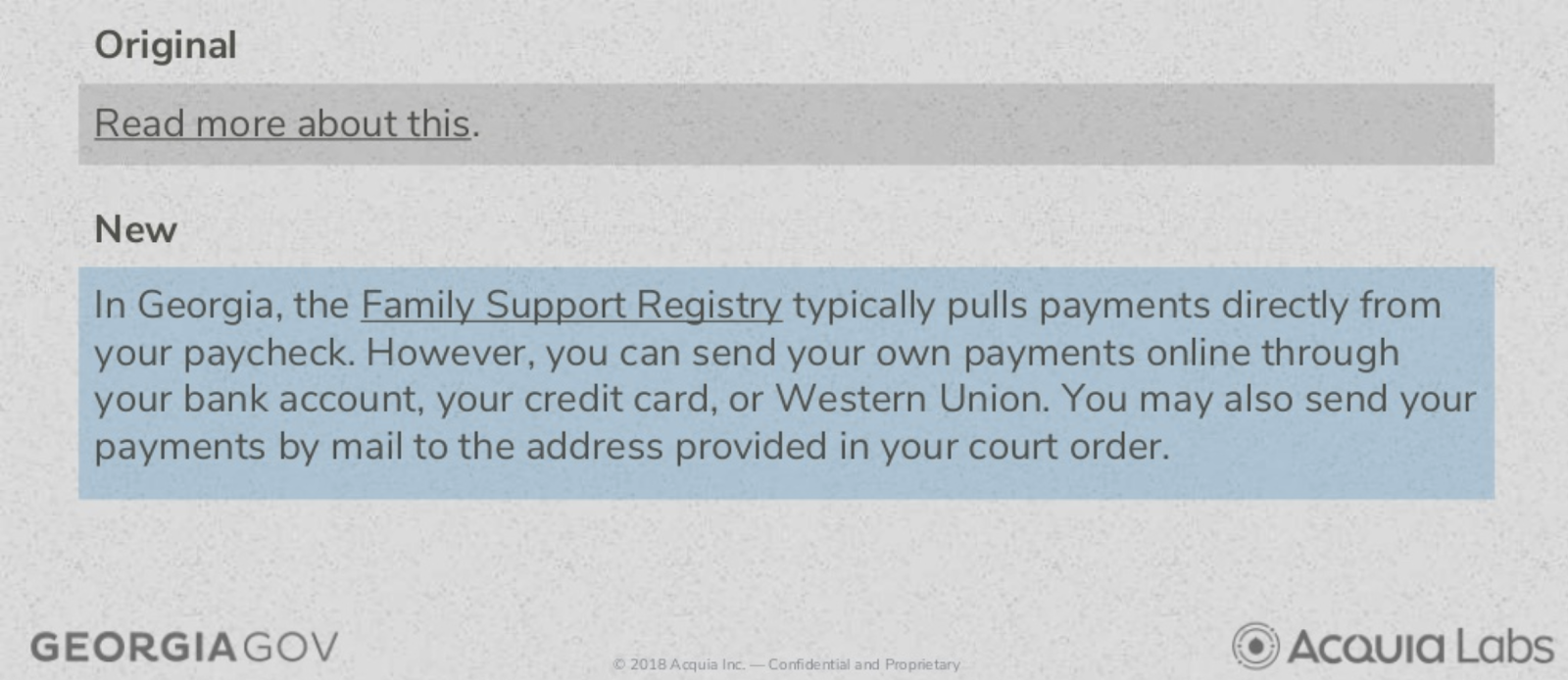

Something else to watch out for was calls to action and links, due to the absence of the visual component in the conversational experience. For instance, links like “Read more” or “Click to learn more” were not applicable in the conversational setting. This exercise actually helped the team to question some of those links that were pointing users to yet another piece of content, rather than to an action or an outcome.

Take this example into consideration:

The link had to be enriched with the information that provided more context, still preserving the ability to link out for the web-based users and at the same time not disrupting the Ask Georgia user experience.
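Part of such an audit could be automated. The sketch below uses Python's standard `html.parser` module to list links whose text carries no context when read aloud. The list of generic phrases and the sample HTML are assumptions made up for this example; the team's audit, as described, was a manual line-by-line review.

```python
from html.parser import HTMLParser

# Illustrative list of link texts that mean little without visual context.
GENERIC_LINK_TEXT = {"read more", "learn more", "click here", "click to learn more"}


class LinkCollector(HTMLParser):
    """Collect (href, text) pairs for every anchor element in a page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._buffer = []
        self._in_link = False

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._in_link = True
            self._href = dict(attrs).get("href")
            self._buffer = []

    def handle_data(self, data):
        if self._in_link:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._in_link:
            # Collapse whitespace so multi-line link text compares cleanly.
            text = " ".join("".join(self._buffer).split())
            self.links.append((self._href, text))
            self._in_link = False


def find_context_poor_links(html):
    """Return links whose text is a generic phrase such as 'Read more'."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return [(href, text) for href, text in collector.links
            if text.lower().rstrip(".") in GENERIC_LINK_TEXT]


sample = ('<p>Renewal fees vary. <a href="/fees">Read more</a></p>'
          '<p><a href="/renew">Renew your driver\'s license online</a></p>')
print(find_context_poor_links(sample))
```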

As a result of the audit and alterations, the website became better aligned with its content strategy, and Georgia.gov was better prepared for the future and for new channels.

Next, the team sank their teeth into conversational usability, a very under-explored area that needs more expertise. The main reason this topic is so challenging is that it is solely aural: there is no standard way to test the usability of aural content that is akin to eye-tracking or think-aloud techniques.

As a method for conversational usability testing, the project team adopted a retrospective probing approach. This activity involves asking the users questions after their engagement with Ask GeorgiaGov. While this gives users an uninterrupted and uninfluenced experience, the method revealed a major flaw: at the end of the conversation, users often didn’t remember exactly what they had said or done during the interaction, which posed a big threat to the integrity of the collected test data.

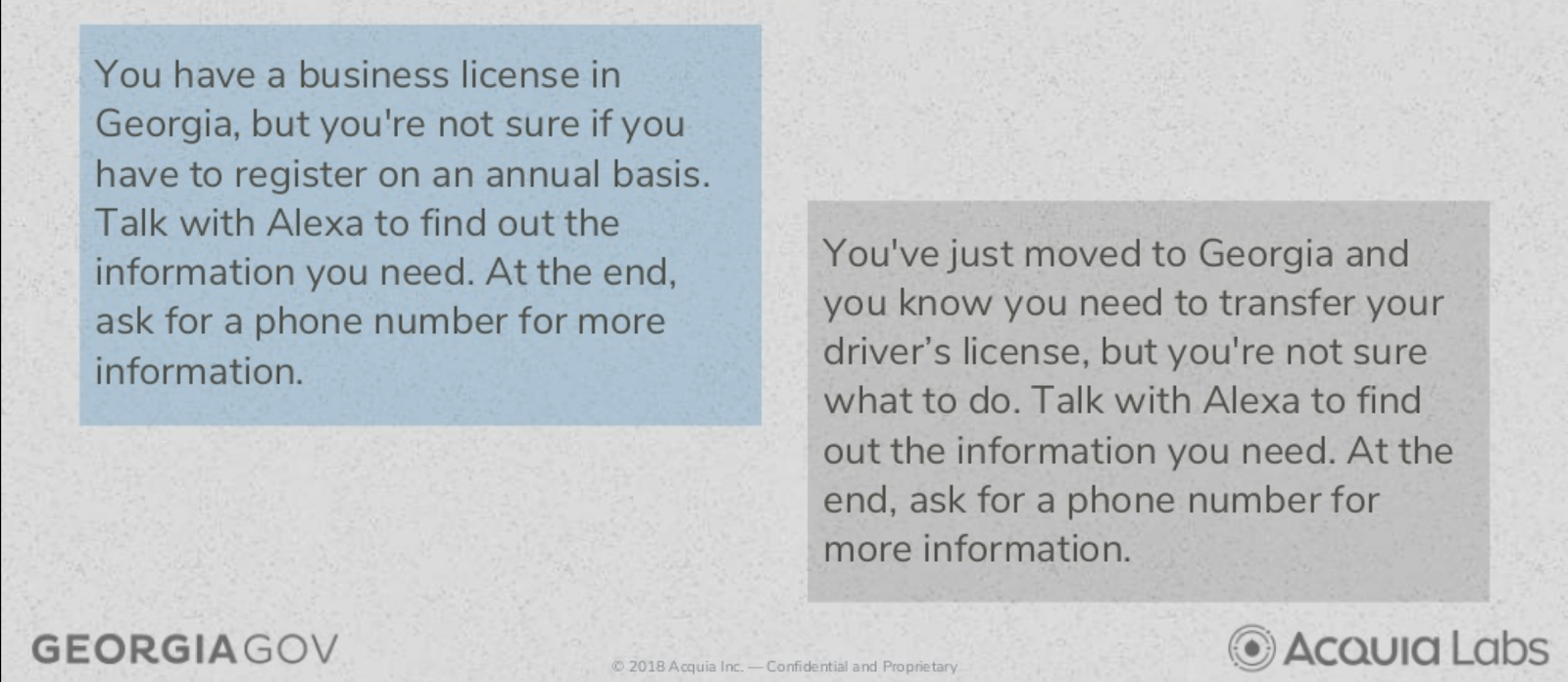

For usability testing, the testers were given a specific situation and were asked to take their understanding of that situation and engage the skill to find the information they needed, and at the end get a phone number they could call for further assistance.

As seen above, there are three components to each activity that really put the Ask GeorgiaGov skill to the test:

The topic, with a complication appended to the end of it

The task to interpret the topic into a set of questions to Alexa

The task to retrieve a phone number to call

The outcomes

With the technology part solved, and all the audits and testing that followed, the Ask GeorgiaGov skill was launched last year. Already, we have a good insight into its performance:

79.2% successful interactions, which is a very high rate among Alexa skills

71.2% of the engagements led to an agency phone number being provided

Most popular keywords: vehicle registration, driver’s licenses, state sales tax

This allows Georgia to adapt to the demands of its citizens, truly giving them control.

The project was featured in a Georgia Public Broadcasting segment and is going to be featured at the first Amazon Alexa summit in Newark later this year.

Questions

The webinar attendees were given an opportunity to ask questions. Below are some of the most important excerpts:

Is Alexa integration available with Drupal 8? Yes. As part of the project, the team ported some of the code from the Drupal 8 module back to Drupal 7.

Do you use highly structured content so that it works with Alexa? This really depends on what kind of content you’re looking at and the level of relations between that content. Some of the relations might not be useful in the conversation and would need to be somehow incorporated into the referring content so that it is easily searchable. There are cases when teams write multiple versions of the content to cater for different channels, including conversation, but it might not be the best approach. The fact is, though, that the more structured your content is, the tougher it is to write an Alexa skill that is reusable, because the skill becomes tightly coupled with the content, something that the team was trying to avoid for Ask GeorgiaGov.

What sort of a team would an organisation need to deliver a similar project? The Ask GeorgiaGov project had a team of 15 developer resources working full time over the course of eight weeks. However, the reason why this was achievable in such a short period of time is that one of the developers was a Drupal architect who knew Drupal 7 extremely well, was able to write custom modules and knew how to use the external PHP library that was used in the project. Also, the team had prior exposure to the Alexa Skills Kit.

Did you find any concerns about privacy using this technology? This is a very good question and raises a big issue for some. The official guidance from Amazon is not to pass any personally identifiable information (PII) across voice interfaces, the reason being that Amazon uses every single piece of audio that has been captured to improve its algorithms in the future. For the Ask GeorgiaGov project, the team refrained from implementing any features that would capture PII.

What is the support for other products like Google Home and Apple HomePod or any other future products? One note that has to be made is that the conversational space is very immature at the moment and has a lot of uncertainties. Initially, it was a very locked-down ecosystem for each of the providers of these products, but they’re gradually starting to be more flexible and open. Of course, you need to understand that these providers use different technologies, platforms, ideas and approaches, meaning that what you write as an Alexa extension would not be easily convertible to other technologies. There are services like DialogFlow that try to standardise the service via its APIs but it’s not quite there yet.